Cinchy Included in 2021 Trends in Data Management on G2

December 10, 2020

Originally published on G2 on December 8th, 2020

This post is part of G2's 2021 digital trends series. Read more about G2’s perspective on digital transformation trends in an introduction from Michael Fauscette, G2's chief research officer, and Tom Pringle, VP, market research, along with additional coverage on trends identified by G2’s analysts.

Data management trends in 2021

In 2021, data-driven leaders will be reassessing their data management strategies due to the evolving technology environment. Organizations will prioritize investments in scalable data platforms to effectively secure, govern, and analyze data across business functions through a single unified platform. These platforms will provide greater control over and allow seamless access to their data, irrespective of where it resides, ultimately helping them gain valuable insights and make better business decisions.

Organizations are able to obtain a competitive edge by building expertise in data management to fuel their business strategy. New tools and technologies based on artificial intelligence (AI) and machine learning (ML) are continuously being introduced to handle the ever-evolving complexities like data diversity and disparity across environments.

Another gradual evolution in data management is the blurring line between IT and business responsibilities; organizations are no longer limited by functional boundaries, enabling enterprise-wide data collaboration and empowering stakeholders across the organization with the right data at the right time.

Let’s dig deeper into the trends that are likely to emerge in the data management space in 2021.

Rethinking data management for hybrid and multicloud strategy

PREDICTION: By 2025, 95% of enterprise organizations will embrace hybrid cloud deployment. Data will see a 25% compound annual growth rate until 2025 with increased diversity and disparity.
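To put the 25% compound annual growth rate in perspective, here is a minimal arithmetic sketch. It assumes, purely for illustration, that the rate compounds over five years (2020 through 2025); the prediction itself does not state a base year, and the function name is hypothetical.

```python
def growth_multiple(cagr, years):
    """Total growth factor after compounding `cagr` for `years` years."""
    return (1 + cagr) ** years


# Assumption: five compounding periods (2020 -> 2025); not stated in the prediction.
multiple = growth_multiple(0.25, 5)
print(f"{multiple:.2f}x")  # prints 3.05x
```

Under that assumption, data volumes would roughly triple over the period.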

The rise of hybrid and multicloud architecture and continuous advances in AI and ML are prompting the data management market to evolve constantly, with intensified challenges, opportunities, and strategies. The recent partnership between two tech giants, IBM and SAP, illustrates the movement of organizations toward the hybrid cloud.

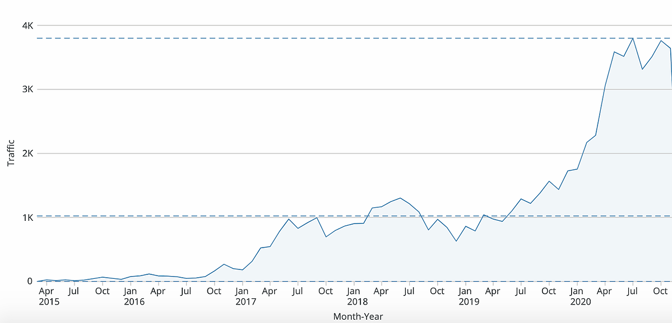

Cloud adoption has drastically increased in recent years, with 2020 accelerating the trend even further amidst the COVID-19 pandemic. Growth in the cloud infrastructure services market soared in Q3, with the pandemic acting as a catalyst and fueling online demand. Companies have started moving more and more of their workloads and data to the cloud, while preferring multiple cloud environments over a single cloud provider.

As enterprises accelerate their migration to the cloud, they are increasingly implementing a multicloud strategy. 93% of enterprises have a multicloud strategy and 87% have a hybrid cloud strategy, according to the State of Cloud Report 2020 by Flexera.

A multicloud strategy enables organizations to maintain a hybrid cloud environment that combines security with specialized capabilities such as integrated ML. The most security-focused workloads and data can be kept in the private cloud, while regular data and applications can run on cost-effective public cloud networks. This type of infrastructure is proving to be a successful model for organizations because it offers a rich set of cloud options, which helps both optimize returns on cloud investments and reduce vendor lock-in.

One of the major challenges emerging with the increasing rate of multicloud and hybrid cloud adoption is managing data across multiple systems and locations within organizations. Businesses will find themselves somewhere between 100% on-premises and 100% cloud on the deployment spectrum.

In a real-world hybrid cloud scenario, most organizations will use a mix of multicloud and on-premises deployment. To overcome the challenges related to this evolving landscape, organizations will adopt end-to-end hybrid data management platforms to deliver greater visibility and control over their data across cloud, hybrid, and on-premises environments while ensuring data security and governance.

Giants in the data management space, such as IBM, define a modern hybrid data management platform as one that should ensure complete accessibility irrespective of source or format, support various deployment options, eliminate restrictions and democratize access to data, and embrace the power of intelligent analytics with embedded ML.

Deciphering the emerging technology in data management: data fabric

PREDICTION: 2021 will see a rise in the emerging data fabric technology.

Data no longer resides in a single environment; it’s scattered across on-premises and cloud environments, which indicates that businesses are moving into a hybrid world. With the exponential growth in data formats, sources, and deployments across organizations, businesses are constantly looking for ways to best optimize data assets that live within existing on-premises legacy systems.

Data fabric can be thought of as a weave that is stretched over a large space that connects multiple locations, types, and sources of data, with methods for accessing that data. Data fabric technology is designed to solve complexities related to managing data disparity in both on-premises and cloud environments through a single unified platform.
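The idea of one access layer over many locations and formats can be sketched in a few lines. This is a toy illustration, not how any vendor's data fabric is built: the `DataFabric` class, the loader functions, and the sample data are all hypothetical, and in-memory strings stand in for an on-premises CSV export and a cloud JSON API.

```python
import csv
import io
import json


class DataFabric:
    """Hypothetical facade: one catalog in front of many underlying sources."""

    def __init__(self):
        self._sources = {}  # dataset name -> zero-argument loader returning rows

    def register(self, name, loader):
        self._sources[name] = loader

    def read(self, name):
        # Callers ask for a dataset by name, not by location or format.
        return self._sources[name]()


# Stand-ins for real systems (assumed sample data).
ON_PREM_CSV = "id,region\n1,EMEA\n2,APAC\n"
CLOUD_JSON = '[{"id": 1, "revenue": 120}, {"id": 2, "revenue": 95}]'


def load_on_prem():
    return list(csv.DictReader(io.StringIO(ON_PREM_CSV)))


def load_cloud():
    return json.loads(CLOUD_JSON)


fabric = DataFabric()
fabric.register("customers", load_on_prem)
fabric.register("sales", load_cloud)

customers = fabric.read("customers")
sales = fabric.read("sales")
```

Both datasets come back as lists of records through the same `read` call, even though one originated as CSV and the other as JSON. A real platform would add governance, access control, and query pushdown on top of this kind of indirection.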

Data fabric is an emerging term in the data technology industry. Organizations like Cinchy, a Toronto-based data collaboration company, are trying to educate vendors on the potential of this technology. The company also recently secured a Series A funding round to support the growing demand for data fabric technology. The focus of these companies is to create a data environment that provides centralized access via a single, unified view of an organization's data that inherits access and governance restrictions, irrespective of the data’s format or location.

Data collaboration technology applied in a data fabric allows users to accelerate and streamline intensive ETL processes by easily connecting to different data sources and, through an interconnected architecture, eliminating time spent moving and copying data between applications. Data professionals believe that with increasingly distributed, dynamic, and diverse data, businesses need frictionless access to and sharing of data, and that this need will drive the rise of data fabric technology.

Organizations continue to embrace AI and ML to fuel data management strategies

Augmented data management (ADM)

Data scientists and data engineers spend the majority of their time manually accessing, preparing, and managing data. ADM is the application of AI/ML technologies to automate manual tasks in data management processes.

PREDICTION: By 2022, 80% of mundane data management tasks will be automated through ADM, allowing data scientists to focus on building development models to gain advanced insights into data.

ADM will help businesses simplify, optimize, and automate operations related to data quality, metadata management, master data management, database management systems, and more, making them self-configuring and self-tuning. An AI/ML-augmented engine offers smart recommendations to data professionals, enabling them to select from multiple prelearned models of solutions to a specific data task. Automating manual data tasks within organizations will yield higher productivity and greater data democratization across the data user community.

Application of ADM in data catalogs

The need for ML-augmented metadata catalogs will continue to rise in 2021. Given how wide and distributed datasets have become, inventorying and synthesizing data for company-wide use poses significant challenges. Today, searching for data and tracing its journey is becoming increasingly important for effective analytics.

Machine learning data catalogs sold like hotcakes in 2020, and this trend will continue to grow. ML automates the mundane aspects of understanding the data and applying policies, business rules, tags, and classifications in data catalogs.

Proliferation of knowledge graphs

Graph databases are relatively old technology. Tech giants like Google, Facebook, and Twitter have been using knowledge graphs to understand their customers, business decisions, and product lines for ages. Knowledge graphs are composed of an underlying graph database to store the data and a reasoning layer to search and derive insights from the data.
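The two layers described above, a graph store plus a reasoning layer, can be illustrated with a deliberately tiny sketch. Everything here is an assumption for illustration: the `TripleStore` class, the `reachable` function, and the sample facts are hypothetical, and production knowledge graphs rely on dedicated graph databases and far richer inference.

```python
from collections import defaultdict


class TripleStore:
    """Tiny in-memory graph store holding (subject, predicate, object) triples."""

    def __init__(self):
        self._out = defaultdict(set)  # subject -> {(predicate, object)}

    def add(self, subject, predicate, obj):
        self._out[subject].add((predicate, obj))

    def objects(self, subject, predicate):
        return {o for p, o in self._out[subject] if p == predicate}


def reachable(store, start, predicate):
    """Reasoning layer: follow `predicate` edges transitively from `start`."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in store.objects(node, predicate):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


store = TripleStore()
store.add("graph database", "is_a", "database")
store.add("database", "is_a", "data management software")
store.add("knowledge graph", "built_on", "graph database")

# Only the first two facts are stored directly; the second hop is inferred.
inferred = reachable(store, "graph database", "is_a")
```

Here `inferred` contains both "database" and "data management software", even though no single stored triple links "graph database" to the latter. That derived fact is the kind of insight the reasoning layer contributes.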

This year, G2 saw a 119% spike in the Graph Databases category and recorded the highest growth during the pandemic. It may be inferred that graph databases proved to be a really valuable tool in modeling the spread of coronavirus. Pharma organizations like AstraZeneca used graph algorithms to find patients that had specific journey types and patterns, and then find others that were close and similar.

The ability of knowledge graphs to untangle and analyze complex, heterogeneous data and discover meaningful relationships has increased the productivity of data scientists. It also helps users continuously learn and grow organically with the help of ontologies.

Graph is proving to be one of the fastest ways to connect data, especially when dealing with complex or large volumes of disparate data. Implementing a knowledge graph in combination with AI and ML algorithms will help instill context and rationale into data. Major use cases of graph processing will be seen in fraud detection, social network analysis, and the healthcare space.

Key takeaways

Organizations are increasingly adopting multicloud strategies and moving their workloads and data to the cloud. Data will be housed both on-premises and in the cloud. Managing this scattered data across multiple sources, formats, and deployments is a challenge organizations will face in 2021. This will lead businesses to reimagine their data management strategy and adopt a hybrid data management approach, with the aim of connecting and managing data irrespective of where it resides.

Organizations will build scalable data platforms powered by AI/ML technology to keep pace with the ever-evolving technological landscape.
